Many companies integrate AI. Few design systems that truly work with AI. This article explains the difference between agent and multi-agent architectures — and why being AI-Ready is not about APIs, but about semantic design, orchestration, and architectural principles. If you are building AI-powered products, this distinction matters.

Almost everyone is using AI at the moment.

LLMs are being integrated. Chat interfaces are being added. Systems generate SQL, write reports, and summarise data.

So far, so good. However, there is a fundamental confusion I keep encountering:

Using AI is not the same thing as designing a system that can genuinely work with AI.

To clarify this distinction, we need to define three concepts: Agent, Multi-Agent, and AI-Ready.

An agent is essentially a decision-making mechanism that performs a specific task. It can analyse text, generate a query, or recommend an action. When equipped with well-defined tools, it can be highly efficient.

Today, many systems operate at this level. There is a single agent with access to certain tools, and it executes the given task.

For simple and well-defined problems, this is entirely sufficient.
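As a rough illustration of this single-agent level, here is a minimal sketch in Python. The `Tool` and `SingleAgent` classes and the `keyword_chooser` function are hypothetical names invented for this example; in particular, `keyword_chooser` is only a stand-in for the decision an LLM would make.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class Tool:
    name: str
    description: str
    run: Callable[[str], str]


class SingleAgent:
    """One decision step: choose a tool for the task, run it, return the result."""

    def __init__(self, tools: Dict[str, Tool],
                 choose: Callable[[str, Dict[str, Tool]], str]):
        self.tools = tools
        self.choose = choose  # stand-in for the model's decision

    def handle(self, task: str) -> str:
        tool_name = self.choose(task, self.tools)
        return self.tools[tool_name].run(task)


def keyword_chooser(task: str, tools: Dict[str, Tool]) -> str:
    # Hypothetical stand-in for an LLM: pick the first tool whose name
    # appears in the task text.
    for name in tools:
        if name in task.lower():
            return name
    raise ValueError(f"no tool matches task: {task!r}")


tools = {
    "summarise": Tool("summarise", "Shorten a text", lambda t: t[:40]),
    "count": Tool("count", "Count the words in a text",
                  lambda t: str(len(t.split()))),
}
agent = SingleAgent(tools, keyword_chooser)
result = agent.handle("count the words here")
```

The agent makes exactly one decision per task. There is no loop, no evaluation of the result, and no way to revise the plan.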

But as processes become more complex, a single-step decision mechanism begins to struggle.

Real-world processes are rarely linear. They are multi-step and feedback-driven. Data is collected in one place, analysed in another, evaluated elsewhere, and if necessary, the plan is revised.

This is where multi-agent systems come into play.

The process can be restructured dynamically when needed. The critical factor here is not the number of agents — it is the orchestration.

For a multi-agent system to function effectively, it requires an orchestration layer. This layer determines:

In some scenarios, a single powerful agent is enough. However, as decision points increase and processes become more dynamic, a multi-agent approach becomes more scalable.
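To make the role of orchestration more concrete, the following sketch shows a feedback-driven loop in Python. The three agents mirror the collect, analyse, and evaluate steps described earlier; the routing rule in `next_agent`, the data, and the `target` value are all assumptions made for illustration, not a prescribed design.

```python
from typing import Callable, Dict, List

State = Dict[str, object]
Agent = Callable[[State], State]


def collect(state: State) -> State:
    # Hypothetical data: each call "collects" one more batch.
    batches = list(state.get("batches", []))
    batches.append([10, 20])
    return {**state, "batches": batches}


def analyse(state: State) -> State:
    values = [v for batch in state["batches"] for v in batch]
    return {**state, "total": sum(values)}


def evaluate(state: State) -> State:
    # Feedback: the result is accepted only once the total reaches the target.
    return {**state, "done": state["total"] >= state["target"]}


def next_agent(state: State, last: str) -> str:
    """Orchestration decision: which agent runs next, given the current state."""
    if last == "collect":
        return "analyse"
    if last == "analyse":
        return "evaluate"
    # After evaluation, either stop or revise the plan by collecting more data.
    return "stop" if state.get("done") else "collect"


def orchestrate(agents: Dict[str, Agent], state: State,
                max_steps: int = 20) -> State:
    trace: List[str] = []
    current = "collect"
    # max_steps is a control boundary: the loop cannot run forever.
    while current != "stop" and len(trace) < max_steps:
        state = agents[current](state)
        trace.append(current)
        current = next_agent(state, current)
    return {**state, "trace": trace}


agents = {"collect": collect, "analyse": analyse, "evaluate": evaluate}
final = orchestrate(agents, {"target": 50})
```

In this run the first evaluation fails, so the orchestrator sends the process back to `collect` and a second pass is made. The number of agents matters less than the routing logic in `next_agent`, which in a real system might itself be model-driven.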

Many organisations say:

“We have APIs. We have REST services. We provide OpenAPI documentation. AI can call our services.”

Technically, that may be true.

But that does not mean the system is AI-Ready.

Let’s consider a simple example:

“Analyse sales performance, identify at-risk customers, and produce a summary.”

In traditional integrations, the calling system already knows:

The flow is predefined and deterministic.

In LLM-based systems, however, you often provide only the objective.

The model must decide:

The task flow is formed dynamically.

If the system has not been designed to accommodate this dynamic behaviour, then AI may be technically integrated — but the system is not AI-Ready.

To be AI-Ready, a system requires several foundational elements:

Tools and services must not only be callable; crucially, they must also describe what they do in a machine-understandable way.

Input-output structures, business context, and expected impact must be explicit.

Providing a list of endpoints is not enough.

You need a layer of meaning.

And this meaning should not rely on internal business jargon alone — it must be described in a way an AI system can interpret. A term like “membership fee” or “dues”, for instance, can vary significantly depending on the domain.
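One possible shape for such a layer of meaning is sketched below in Python. The `ToolSpec` class, its field names, and the `list_unpaid_dues` tool are hypothetical; the point is only that purpose, inputs, outputs, business context, expected impact, and domain terms are stated explicitly rather than left implicit.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass(frozen=True)
class ToolSpec:
    name: str
    purpose: str
    inputs: Dict[str, str]
    outputs: Dict[str, str]
    business_context: str
    expected_impact: str
    glossary: Dict[str, str] = field(default_factory=dict)

    def describe(self) -> str:
        """Render the spec as plain text that could be placed in a model's context."""
        lines = [
            f"Tool: {self.name}",
            f"Purpose: {self.purpose}",
            f"Business context: {self.business_context}",
            f"Expected impact: {self.expected_impact}",
        ]
        lines += [f"Input {k}: {v}" for k, v in self.inputs.items()]
        lines += [f"Output {k}: {v}" for k, v in self.outputs.items()]
        lines += [f"Term '{k}' means: {v}" for k, v in self.glossary.items()]
        return "\n".join(lines)


# Hypothetical tool for a membership organisation.
spec = ToolSpec(
    name="list_unpaid_dues",
    purpose="List members whose dues for a given year are unpaid",
    inputs={"year": "Calendar year, e.g. 2024"},
    outputs={"members": "Member ids with the unpaid amount for that year"},
    business_context="Used by the finance team before sending reminders",
    expected_impact="Read-only; does not change any member record",
    glossary={"dues": "The recurring annual membership fee, not a general debt"},
)
text = spec.describe()
```

The glossary entry is where a domain-dependent term such as "dues" is pinned to one meaning for this particular system.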

LLMs operate on concepts, not table names.

If the domain model is invisible, and if the data schema does not align with business concepts, the system may be technically accessible but semantically meaningless to AI.

An LLM cannot reliably infer that tbl_cst_trx_01 actually represents “Customer Sales Transactions”.
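A small semantic mapping can bridge that gap. The sketch below, in Python, links the business concept to the physical table. Only the table name `tbl_cst_trx_01` and the label "Customer Sales Transactions" come from the example above; the column names, the description, and the `to_physical` helper are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Concept:
    label: str
    table: str
    description: str
    columns: Dict[str, str]  # business name -> physical column (hypothetical)


SEMANTIC_MODEL: Dict[str, Concept] = {
    "customer_sales_transactions": Concept(
        label="Customer Sales Transactions",
        table="tbl_cst_trx_01",
        description="One row per completed sale to a customer.",
        columns={
            "customer id": "cst_id",
            "sale amount": "trx_amt",
            "sale date": "trx_dt",
        },
    )
}


def to_physical(concept_key: str, business_column: str) -> str:
    """Translate a business-level reference into a table.column reference."""
    concept = SEMANTIC_MODEL[concept_key]
    return f"{concept.table}.{concept.columns[business_column]}"


physical = to_physical("customer_sales_transactions", "sale amount")
```

With a mapping like this, the model can reason in terms of "sale amount" while the system resolves the cryptic physical names on its behalf.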

In an AI-Ready system:

If a “risk definition” exists only as embedded backend code, the LLM cannot reason about it.
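By way of contrast, here is a sketch in Python of a risk definition expressed as data rather than buried in code. The thresholds, attribute names, and the `Condition` class are invented for this example and do not represent any real risk model.

```python
import operator
from dataclasses import dataclass
from typing import Callable, Dict, List

OPS: Dict[str, Callable[[float, float], bool]] = {
    ">": operator.gt,
    "<": operator.lt,
    ">=": operator.ge,
    "<=": operator.le,
}


@dataclass(frozen=True)
class Condition:
    attribute: str
    op: str
    value: float
    meaning: str  # human- and model-readable explanation


# Hypothetical definition of an "at-risk customer".
AT_RISK_RULE: List[Condition] = [
    Condition("days_since_last_order", ">", 90, "no order in the last 90 days"),
    Condition("revenue_change_pct", "<", -20, "revenue fell by more than 20%"),
]


def matched_conditions(rule: List[Condition],
                       customer: Dict[str, float]) -> List[str]:
    """Return the meaning of every condition the customer satisfies."""
    return [c.meaning for c in rule
            if OPS[c.op](customer[c.attribute], c.value)]


def is_at_risk(rule: List[Condition], customer: Dict[str, float]) -> bool:
    # A customer is at risk only if all conditions hold.
    return len(matched_conditions(rule, customer)) == len(rule)


customer = {"days_since_last_order": 120, "revenue_change_pct": -35}
reasons = matched_conditions(AT_RISK_RULE, customer)
at_risk = is_at_risk(AT_RISK_RULE, customer)
```

Because the rule is plain data, it can be shown to a model, explained back to a user, and changed without redeploying backend code.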

Business rules should be modelled in a way that makes them:

Working with uncertainty does not mean working without control.

Authorisation boundaries, data access policies, and contextual tool usage rules must be clearly defined.

Dynamism and control should be designed together — not treated as opposing forces.
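A minimal sketch of what designing the two together could look like, in Python: the agent is free to choose any tool, but every call passes through an explicit policy check and is recorded. The tool names, roles, and data scopes below are hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class ToolPolicy:
    allowed_roles: FrozenSet[str]
    allowed_data_scopes: FrozenSet[str]


# Hypothetical policies; unknown tools are denied by default.
POLICIES: Dict[str, ToolPolicy] = {
    "read_sales": ToolPolicy(frozenset({"analyst", "manager"}),
                             frozenset({"sales"})),
    "export_customers": ToolPolicy(frozenset({"manager"}),
                                   frozenset({"sales", "crm"})),
}


def authorise(tool: str, role: str, scope: str,
              audit_log: List[Dict[str, object]]) -> bool:
    """Check a dynamically chosen tool call and record the decision."""
    policy = POLICIES.get(tool)
    allowed = (
        policy is not None
        and role in policy.allowed_roles
        and scope in policy.allowed_data_scopes
    )
    # Every decision is logged, allowed or not, to keep the system observable.
    audit_log.append({"tool": tool, "role": role, "scope": scope,
                      "allowed": allowed})
    return allowed


audit_log: List[Dict[str, object]] = []
ok = authorise("read_sales", "analyst", "sales", audit_log)
denied = authorise("export_customers", "analyst", "crm", audit_log)
unknown = authorise("delete_everything", "manager", "sales", audit_log)
```

The task flow stays dynamic, since nothing here fixes the order of calls, yet the boundaries are enforced and auditable at every step.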

In my view, the correct sequence is this:

When done the other way around, what usually happens is this:

The system appears to work.

But as complexity grows, fragility increases.

That is AI integration.

It is not AI architecture.

An integration-ready system is designed for predefined, deterministic workflows. It assumes prior developer knowledge.

An AI-Ready system, by contrast, is modelled to respond to goal-based requests. It is discoverable, semantically meaningful, open to dynamic task flows — yet controlled and observable.

AI is not merely a feature.

It is an architectural principle.

If your system is not AI-Ready, you may be using AI — but you are not truly building a system that works with AI.

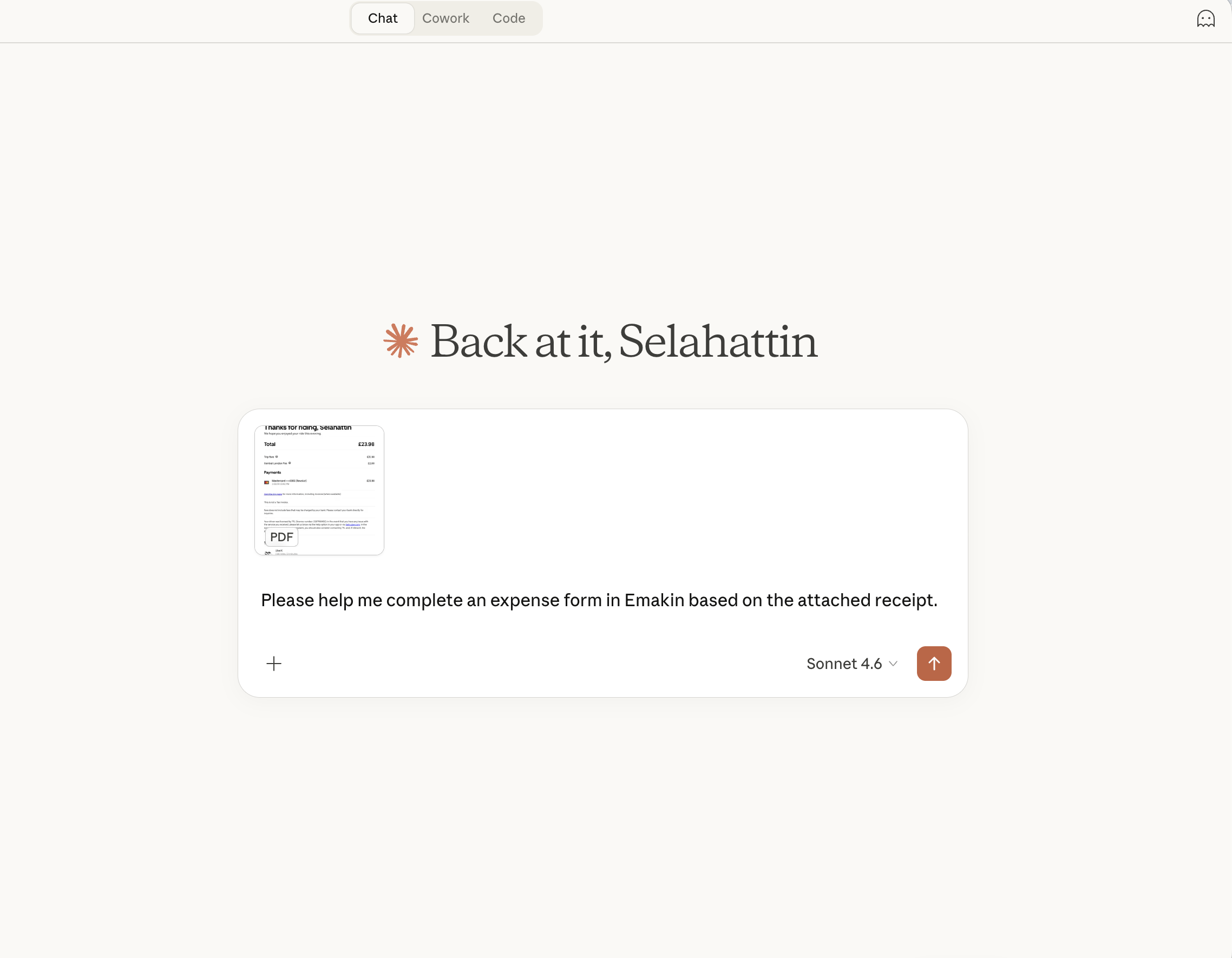

Oh, and before I forget —

Emakin 9.0 is on its way.
